Chirpanalytica: "Give me your Twitter name and I'll tell you which party you vote for"

What if you could automatically determine a person's political orientation just from a Twitter account? That's exactly the task I've been working on for the past two years.

Update: Unfortunately, the project was put into premature hibernation in mid-2023 due to API changes after the takeover. More about the end of Chirpanalytica can be found in the corresponding farewell post.

Below is the unchanged post from the release of Chirpanalytica:

Using the last 100 tweets of an account to determine which political party the person behind the user account is closest to - a simple idea that I've been working on for almost two years.

Countless hours of work and the help of many different people later, I'm presenting Chirpanalytica - the Wahl-O-Mat for Twitter accounts.

A backstory

Even though I've been working on the project for almost two years now, the idea behind it is much older than that: At Jugend hackt 2017 in Frankfurt am Main, the "Profil-O-Mat" was born over the course of a weekend. Even though I wasn't a direct part of the project group (I did help a bit with the frontend, but I didn't have the technical understanding to really contribute properly and therefore preferred working on a Twitter mini-game), I was already fascinated by the idea and the accuracy achieved in just one weekend.

After the Profil-O-Mat went offline shortly after the hackathon (as unfortunately happens with many hackathon projects, since the computing power becomes too expensive in the long run), I decided to bring the project idea back to life.

Unfortunately, when the project was taken offline, the GitHub repository and all other project parts were deleted as well, so only the parts of the project that I had helped with remained. On top of that came the Twitter API update to version 2, which introduced rate limits and made the project's continued existence even more difficult.

Still, I got to work creating a concept for the newly christened Chirpanalytica project (the name is a tongue-in-cheek reference to the Facebook Cambridge Analytica scandal) - without knowing how much work was ahead of me.

We need data, boss!

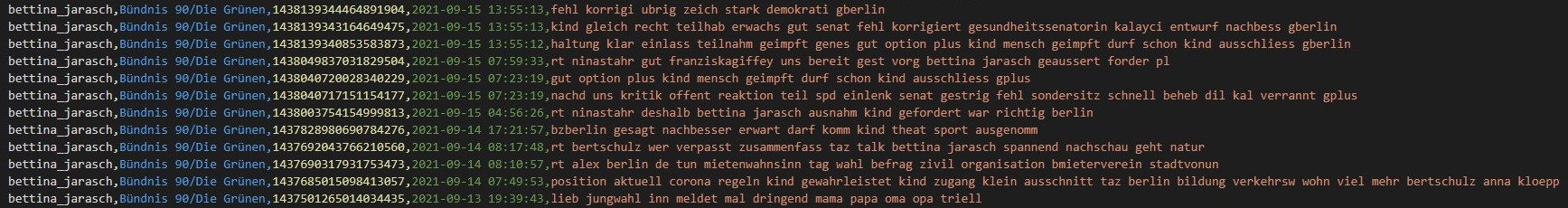

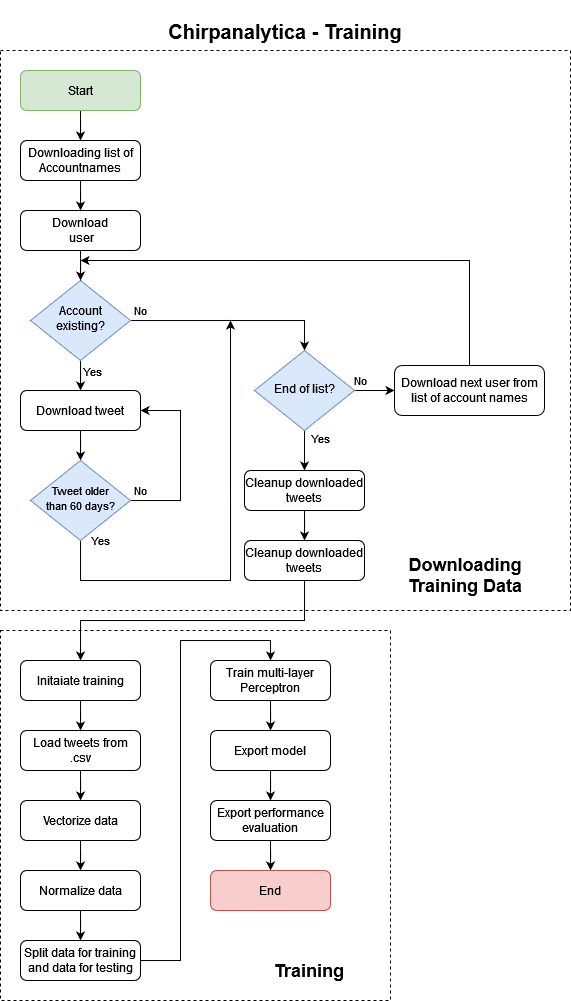

The first task in the project was to generate a training dataset for the neural network. The goal was to collect more than 7,000 tweets from politicians of various parties (SPD, Union, FDP, AfD, DIE LINKE, Bündnis 90/Die Grünen, and the Pirate Party), in a way that was fully automated and as clearly structured as possible.

I started by thinking about how I could even get the names of the Twitter accounts. After trying out all kinds of ideas, from a manual list to a scraping tool for the members overview of the German Bundestag, I was pointed toward a very exciting idea: on Wikidata you can write your own queries and easily retrieve specific properties of people.

After a whole lot of trial and error and help from Martin (more on that in the acknowledgements at the very bottom), I came up with a query that downloads all Twitter accounts of German politicians from the parties mentioned above that are listed on Wikidata.
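A query of this kind might look roughly like the following sketch. It is not the original query: it assumes the Wikidata properties P102 (member of political party) and P2002 (Twitter username), and it expects the party item IDs to be looked up on Wikidata and passed in by the caller. The function names and the User-Agent string are made up for illustration.

```python
import csv
import io
import urllib.parse
import urllib.request

WIKIDATA_SPARQL_URL = "https://query.wikidata.org/sparql"


def build_query(party_qids):
    """Build a SPARQL query for people in the given parties who have a Twitter name."""
    values = " ".join(f"wd:{qid}" for qid in party_qids)
    return f"""
SELECT ?twitter ?personLabel ?partyLabel WHERE {{
  VALUES ?party {{ {values} }}
  ?person wdt:P102 ?party ;
          wdt:P2002 ?twitter .
  SERVICE wikibase:label {{ bd:serviceParam wikibase:language "de,en". }}
}}
"""


def parse_accounts(csv_text):
    """Turn the CSV answer into a list of (twitter_name, party) tuples."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [(row["twitter"], row["partyLabel"]) for row in reader]


def fetch_accounts(party_qids):
    """Send the query to the Wikidata endpoint and ask for CSV (needs network access)."""
    params = urllib.parse.urlencode({"query": build_query(party_qids)})
    request = urllib.request.Request(
        WIKIDATA_SPARQL_URL + "?" + params,
        headers={"Accept": "text/csv", "User-Agent": "chirp-sketch/0.1 (example)"},
    )
    with urllib.request.urlopen(request) as response:
        return parse_accounts(response.read().decode("utf-8"))
```

Calling `fetch_accounts` with the item IDs of the seven parties would then yield the account list that the downloader works through.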

Using this query, I fetched a .csv file via a cURL request, containing the data needed to load the people into a Python script. That let me start on downloading the actual tweets. After many, many hours of work (mostly because of library issues, since many libraries still couldn't properly handle Twitter API version 2), I arrived at a reasonably satisfactory downloader. The biggest disadvantage of this Tweepy-based implementation was, and still is, its very slow execution: downloading the last 200 tweets from 900 accounts takes more than 21 hours. Still, the result is very rewarding: 70,000 tweets in one file, neatly compiled as comma-separated values with account name and corresponding parliamentary group.
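The shape of such a downloader can be sketched without tying it to a particular Twitter library. In the sketch below, `fetch_tweets` is a hypothetical callable that stands in for the Tweepy call; the column names and the choice to skip accounts that fail (deleted, protected, rate limited) are assumptions as well.

```python
import csv


def write_tweets_csv(path, accounts, fetch_tweets, per_account=200):
    """Write one CSV row per tweet: account name, party, tweet text.

    accounts:     iterable of (username, party) tuples
    fetch_tweets: hypothetical callable (username, limit) -> list of tweet texts
    Returns the number of tweets written.
    """
    written = 0
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.writer(handle)
        writer.writerow(["account", "party", "text"])
        for username, party in accounts:
            try:
                tweets = fetch_tweets(username, per_account)
            except Exception as error:
                # One failing account should not abort a run that takes hours.
                print(f"skipping {username}: {error}")
                continue
            for text in tweets[:per_account]:
                writer.writerow([username, party, text])
                written += 1
    return written
```

Because the `csv` module quotes fields as needed, tweets containing commas or line breaks still end up as one row each.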

Practice makes perfect

With this dataset, it was time to train the neural network. After initial attempts with the TensorFlow framework, I concluded that it wasn't really suitable for my text-processing use case. In hindsight, I was probably intimidated a bit too quickly by the huge documentation, because there actually are very promising projects built on TensorFlow for NLP (Natural Language Processing).

After TensorFlow, I experimented a bit with PyTorch, but quickly moved on from there to scikit-learn: this well-known and extensive library in turn builds on the NumPy and SciPy libraries, so getting started wasn't too complex with a bit of NumPy experience.

Over a few weekends, with a little initial help from various examples and leftovers from the "Profil-O-Mat," I was able to get the training running again. I won't go too deeply into the topic of deep learning here (maybe that'll be a separate blog post sometime), but broadly speaking the data is first prepared and split into training and test sets before scikit-learn's multi-layer perceptron classifier is trained and then evaluated on both the training data and the held-out test data. At the end of the hours-long training phase, the exported network and the performance evaluation are available.
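The prepare, split, train, and evaluate steps can be sketched with scikit-learn as follows. This is not the project's actual training script: the TF-IDF text features, the hidden layer size, and the function name are assumptions. The one-third test split is inferred from the support counts in the example evaluation in this post.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.metrics import classification_report
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline


def train_party_classifier(texts, parties, seed=0):
    """Split the tweets, fit TF-IDF + MLP, and return the model and a test report."""
    # Hold back one third of the tweets; stratify keeps the party mix equal in both parts.
    texts_train, texts_test, y_train, y_test = train_test_split(
        texts, parties, test_size=1 / 3, stratify=parties, random_state=seed
    )
    model = make_pipeline(
        TfidfVectorizer(),  # assumed feature extraction: tweet text to sparse vectors
        MLPClassifier(hidden_layer_sizes=(32,), max_iter=300, random_state=seed),
    )
    model.fit(texts_train, y_train)
    report = classification_report(y_test, model.predict(texts_test), zero_division=0)
    return model, report
```

Wrapping vectorizer and classifier in one pipeline means the exported model can later be fed raw tweet text directly, without repeating the preprocessing by hand.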

While I was working on training the neural network, Torben, one of the project collaborators from back then and a friend of mine, joined the project and directly helped me with properly preparing the data: because of an incorrect matrix conversion, every tweet ended up as its own party (class), which caused quite a bit of chaos during training.
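A cheap guard against this kind of bug is to check the label column before training starts. The helper below is a hypothetical sketch, not part of the original code: it only verifies that the set of distinct labels is exactly the set of expected parties.

```python
def check_labels(labels, expected_parties):
    """Raise ValueError unless the labels are exactly the expected parties.

    Returns the number of distinct classes on success.
    """
    found = set(labels)
    expected = set(expected_parties)
    if found != expected:
        unexpected = sorted(found - expected)
        missing = sorted(expected - found)
        raise ValueError(
            f"{len(found)} distinct labels found; "
            f"unexpected: {unexpected[:5]}, missing: {missing}"
        )
    return len(found)
```

With seven parties, a label column that suddenly contains tens of thousands of distinct values fails this check immediately instead of after hours of training.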

By February 2021, the training was working again and I was able to analyze the first sentences.

Example performance evaluation

Evaluating performance...

Training error: 0.05285329139519209

Test error: 0.43669961806335655

Training data:

| Party | Precision | Recall | F1-score | Support |
| --- | --- | --- | --- | --- |
| Freie Demokratische Partei | 0.94 | 0.99 | 0.96 | 3240 |
| Alternative für Deutschland | 0.95 | 0.78 | 0.86 | 1310 |
| Christlich Demokratische Union Deutschlands | 0.94 | 0.98 | 0.96 | 6021 |
| Piratenpartei Deutschland | 0.85 | 0.76 | 0.81 | 1607 |
| Sozialdemokratische Partei Deutschlands | 0.91 | 0.98 | 0.95 | 7102 |
| Die Linke | 0.99 | 0.91 | 0.95 | 3723 |
| Bündnis 90/Die Grünen | 0.97 | 0.96 | 0.96 | 12605 |
| accuracy | | | 0.95 | 35608 |
| macro avg | 0.94 | 0.91 | 0.92 | 35608 |
| weighted avg | 0.95 | 0.95 | 0.95 | 35608 |

Test data:

| Party | Precision | Recall | F1-score | Support |
| --- | --- | --- | --- | --- |
| Freie Demokratische Partei | 0.51 | 0.45 | 0.48 | 1606 |
| Alternative für Deutschland | 0.38 | 0.35 | 0.36 | 679 |
| Christlich Demokratische Union Deutschlands | 0.55 | 0.60 | 0.57 | 3009 |
| Piratenpartei Deutschland | 0.25 | 0.25 | 0.25 | 863 |
| Sozialdemokratische Partei Deutschlands | 0.50 | 0.55 | 0.52 | 3434 |
| Die Linke | 0.57 | 0.49 | 0.52 | 1889 |
| Bündnis 90/Die Grünen | 0.69 | 0.67 | 0.68 | 6324 |
| accuracy | | | 0.56 | 17804 |
| macro avg | 0.49 | 0.48 | 0.48 | 17804 |
| weighted avg | 0.56 | 0.56 | 0.56 | 17804 |
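The summary rows of such a report can be recomputed from the per-party rows, which helps when reading it. The sketch below does this for the precision column of the test data; the variable and function names are made up for illustration. Because the per-party values are already rounded to two decimals, the results only approximately match the reported 0.49 (macro) and 0.56 (weighted).

```python
# Per-party (precision, support) pairs copied from the test data report.
TEST_ROWS = {
    "Freie Demokratische Partei": (0.51, 1606),
    "Alternative für Deutschland": (0.38, 679),
    "Christlich Demokratische Union Deutschlands": (0.55, 3009),
    "Piratenpartei Deutschland": (0.25, 863),
    "Sozialdemokratische Partei Deutschlands": (0.50, 3434),
    "Die Linke": (0.57, 1889),
    "Bündnis 90/Die Grünen": (0.69, 6324),
}


def macro_average(rows):
    """Plain mean over parties: every party counts the same."""
    return sum(value for value, _ in rows.values()) / len(rows)


def weighted_average(rows):
    """Mean weighted by support: parties with more tweets count more."""
    total = sum(support for _, support in rows.values())
    return sum(value * support for value, support in rows.values()) / total


print(f"macro avg precision:    {macro_average(TEST_ROWS):.3f}")
print(f"weighted avg precision: {weighted_average(TEST_ROWS):.3f}")
```

The weighted average is higher than the macro average because the party with the most tweets, Bündnis 90/Die Grünen, is also the one the network recognizes best. The two error values are consistent with error = 1 - accuracy, and the gap between 0.95 accuracy on the training data and 0.56 on the test data shows that the network fits its training tweets far better than unseen ones.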

Frontend and Twitter bots, or: The crunch before the 2021 federal election

After the trained network was ready at the end of February, I first had to move the project down my priority list for a few months: alongside competitions and travel, I had little time to continue working on Chirpanalytica.

After the summer holidays, however, I got back into the project, and this time with more drive than ever: the upcoming federal election gave me a fixed "deadline" by which I really wanted to release version 1.

So I began steering the project toward the finish line: first building a Python script for account prediction, then getting a web server running again that, after being given the desired user account via query string, returned the party matches as JSON.
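The core of such a prediction endpoint can be sketched independently of any web framework. Everything named here is an assumption for illustration: the `user` query parameter, the averaging of per-tweet probabilities into percentages, the `predict_account` callable that would wrap the trained network, and the permissive CORS header.

```python
import json
from collections import defaultdict
from urllib.parse import parse_qs, urlparse


def party_matches(tweet_probabilities):
    """Average per-tweet party probabilities into one percentage per party."""
    count = len(tweet_probabilities)
    if count == 0:
        return {}
    totals = defaultdict(float)
    for probabilities in tweet_probabilities:
        for party, probability in probabilities.items():
            totals[party] += probability
    return {party: round(100 * total / count, 1) for party, total in totals.items()}


def handle_request(url, predict_account):
    """Return (status, headers, body) for a URL such as /predict?user=NAME.

    predict_account: hypothetical callable username -> list of {party: probability}
    dicts, one dict per analyzed tweet.
    """
    headers = {
        "Content-Type": "application/json",
        # Lets a frontend on another origin read the response; "*" is the
        # simplest setting, a real deployment may want to name its frontend.
        "Access-Control-Allow-Origin": "*",
    }
    username = parse_qs(urlparse(url).query).get("user", [""])[0].lstrip("@")
    if not username:
        return 400, headers, json.dumps({"error": "missing user parameter"})
    matches = party_matches(predict_account(username))
    return 200, headers, json.dumps({"user": username, "matches": matches})
```

Keeping the request handling in a plain function like this makes it easy to test without starting a server and to plug into whichever web server is used.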

Next, the frontend had to be built and tested. I also created a logo and took care of the Twitter bot.

I wrote this Twitter bot in Python during an all-nighter. The Chirpbot (as it was christened) is completely separate from the backend of the Chirpanalytica project and runs independently as a service on my server.

This led to quite a lot of progress on the project in two weeks; I worked around 5-7 hours a day on finishing Chirpanalytica. In all those hours, there were both very pleasant experiences and nerve-racking web browser problems. CORS requests (Cross-Origin Resource Sharing) cost me sweat and tears, so working on the following GIF was a welcome change of pace:

Your tweets match the following parties this much:

⬛ CDU: 10.7%

🟥 SPD: 22.0%

🟨 FDP: 11.6%

🟥 Die Linke: 4.2%

🟩 Die Grünen: 32.0%

🟧 Piratenpartei: 17.5%

🟦 AfD: 2.1%

In total, we analyzed 49 of your tweets.

September 23, 2021

Last but not least, Torben helped me a great deal once again by supporting me in cleaning up the code and making the frontend responsive. That meant that in the week before the federal election, Chirpanalytica was finally ready for use in version 1.0.

The result: Fly, bird, fly!

After many all-nighters (this post was written during one too), I'm happy to finally present Chirpanalytica: by entering a Twitter username on https://de.chirpanalytica.com/, by mentioning @chirpanalytica on Twitter, or by using the window below this paragraph, you can very easily get the data on the parties that most closely match the user's political orientation. It's important that the Twitter account writes content in German and is as active as possible.

And now: Have fun with Chirpanalytica!

Acknowledgements

I owe it to the people without whom this project would not have been possible in this form to say thank you:

First and foremost, the Jugend hackt project group behind the "Profil-O-Mat" from Jugend hackt Frankfurt am Main 2017, who provided a great proof of concept and a basic structure for the project: Adrian, Levy, Bela, Niklas, Philipp and Torben.

I can thank Torben once again right away: without his collaboration on Chirpanalytica, it would not have been possible to get the project running. Thank you very much, Torben!

I'd also like to thank Martin for the help in creating the Wikidata query to obtain the politicians' Twitter accounts.